In the landscape of artificial intelligence (AI), deep learning has emerged as a game-changing technology that is transforming industries ranging from healthcare and finance to entertainment and autonomous vehicles. As one of the most powerful subsets of machine learning, deep learning has enabled computers to perform tasks that were once thought to be uniquely human, such as recognizing speech, understanding images, and making complex decisions.

A fascinating aspect of deep learning is its ability to self-optimize over time. This concept refers to a system’s capacity to improve its performance and decision-making abilities through its own learning processes, without requiring constant manual adjustments or reprogramming. By leveraging massive amounts of data and advanced algorithms, deep learning models can automatically refine their internal structures to increase accuracy, efficiency, and adaptability.

In this article, we will explore the fundamentals of deep learning, the concept of self-optimization, and how these technologies are reshaping the future of AI. We will also examine the impact they are having on industries, the challenges associated with their implementation, and the potential future directions for these groundbreaking technologies.

1. Understanding Deep Learning: An Overview

1.1 What is Deep Learning?

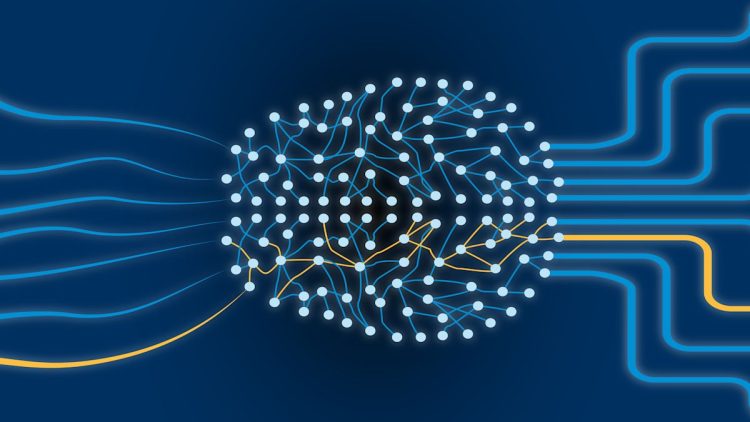

At its core, deep learning is a subset of machine learning, which is itself a branch of artificial intelligence. It involves the use of neural networks, specifically deep neural networks (DNNs), to model and understand patterns in data. Whereas traditional machine learning typically relies on hand-engineered features, deep learning learns useful representations directly from large volumes of data through multiple layers of computation.

Deep learning models consist of layers of nodes (also known as neurons) organized into an architecture loosely inspired by the human brain. These networks are capable of processing vast amounts of data, such as images, text, or sound, by learning from examples and improving their predictions over time.

Key Concepts in Deep Learning:

- Neural Networks: These are the backbone of deep learning. A neural network consists of layers of interconnected nodes, each performing simple computations. These networks process data in a hierarchical manner, with each layer refining the information before passing it to the next.

- Activation Functions: These functions determine the output of each neuron in the network, helping the model learn non-linear patterns and make decisions based on complex data.

- Backpropagation: This is the algorithm that computes how much each weight and bias contributed to the network’s error. The error is propagated backward through the network, layer by layer, to obtain gradients, and an optimizer such as gradient descent then uses those gradients to update the parameters and reduce the error.
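The three concepts above can be sketched in a few dozen lines of plain Python. This is a minimal illustration, not a production implementation: the network size, the sigmoid activation, the squared-error loss, the learning rate, and the XOR dataset are all assumptions chosen to keep the example small.

```python
import math
import random

def sigmoid(z):
    """Activation function: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Return the hidden activations and the scalar output for one input."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output

def loss(params, data):
    """Mean squared error over a list of (input, target) pairs."""
    return sum((forward(params, x)[1] - y) ** 2 for x, y in data) / len(data)

def backprop(params, x, y):
    """Gradients of the squared error (output - y)^2 for a single example."""
    w1, b1, w2, b2 = params
    hidden, out = forward(params, x)
    # Output layer: chain rule through the squared error and the sigmoid.
    delta_out = 2.0 * (out - y) * out * (1.0 - out)
    grad_w2 = [delta_out * h for h in hidden]
    # Hidden layer: the output error is propagated backward through w2.
    delta_hidden = [delta_out * w * h * (1.0 - h) for w, h in zip(w2, hidden)]
    grad_w1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_w1, delta_hidden, grad_w2, delta_out

def train(data, hidden_size=3, lr=0.5, epochs=5000, seed=0):
    """Per-example gradient descent on a one-hidden-layer network."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden_size)]
    b1 = [0.0] * hidden_size
    w2 = [rng.uniform(-1, 1) for _ in range(hidden_size)]
    b2 = 0.0
    for _epoch in range(epochs):
        for x, y in data:
            g_w1, g_b1, g_w2, g_b2 = backprop((w1, b1, w2, b2), x, y)
            # Gradient descent step: move each parameter against its gradient.
            w1 = [[w - lr * g for w, g in zip(row, g_row)]
                  for row, g_row in zip(w1, g_w1)]
            b1 = [b - lr * g for b, g in zip(b1, g_b1)]
            w2 = [w - lr * g for w, g in zip(w2, g_w2)]
            b2 = b2 - lr * g_b2
    return w1, b1, w2, b2

# XOR is a classic pattern that no single linear unit can represent.
XOR = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]

if __name__ == "__main__":
    untrained = train(XOR, epochs=0)
    trained = train(XOR)
    print("loss before training:", loss(untrained, XOR))
    print("loss after training: ", loss(trained, XOR))
```

Note the division of labor: `backprop` only computes gradients, while the update inside `train` is ordinary gradient descent. Real frameworks compute the same gradients automatically and usually pair them with more elaborate optimizers.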

1.2 Types of Deep Learning Models

There are several types of deep learning models, each designed to address specific tasks or types of data. Some of the most widely used models include:

- Convolutional Neural Networks (CNNs): Primarily used for image recognition and processing, CNNs excel at identifying visual patterns and features. They are used extensively in applications like facial recognition, object detection, and self-driving cars.

- Recurrent Neural Networks (RNNs): These models are designed for sequential data, such as time series or natural language processing (NLP). They are used in applications like language translation, speech recognition, and sentiment analysis.

- Generative Adversarial Networks (GANs): GANs consist of two networks, a generator and a discriminator, that are trained in competition: the generator produces synthetic data such as images or video, and the discriminator tries to distinguish it from real data. This contest pushes the generator toward increasingly realistic output. GANs are used in creative fields, such as art generation and deepfake technology.

- Transformer Networks: Transformers have revolutionized natural language processing by using self-attention to relate every position in a sequence to every other position, which lets them process sequences in parallel rather than one step at a time. They power popular models like GPT (Generative Pre-trained Transformer), which is widely used for tasks like text generation, summarization, and language translation.
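Transformers are built around scaled dot-product attention, in which each position in a sequence computes a weighted average over all positions. The sketch below shows only that core operation in plain Python. It is deliberately simplified: the learned query, key, and value projections, multiple heads, and positional information found in real Transformer layers are omitted, and the function names are illustrative.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns the attended outputs and the attention weights, one row per query.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        row = softmax(scores)
        weights.append(row)
        # Output is the weight-averaged combination of the value vectors.
        outputs.append([sum(w * v[c] for w, v in zip(row, values))
                        for c in range(len(values[0]))])
    return outputs, weights

if __name__ == "__main__":
    # Three toy token vectors attending to one another.
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    attended, attention = self_attention(tokens, tokens, tokens)
    for row in attention:
        print([round(w, 3) for w in row])
```

Because every query is scored against every key independently, the rows can be computed in parallel, which is one reason Transformers scale well on modern hardware.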

2. Self-Optimization in Deep Learning

2.1 What is Self-Optimization?

Self-optimization refers to a system’s ability to automatically improve its performance by adjusting its internal processes and parameters. In the context of deep learning, self-optimization enables AI models to refine their architecture, fine-tune their parameters, and adapt to changing conditions without manual intervention. This capability is particularly powerful in dynamic environments where constant reprogramming or retraining is impractical.

Self-optimization is typically achieved through techniques such as reinforcement learning, hyperparameter tuning, unsupervised learning, meta-learning, and neural architecture search. These techniques allow models to improve iteratively by using a feedback signal, such as a reward from the environment or a validation score, to adjust their behavior.

2.2 Key Mechanisms of Self-Optimization in Deep Learning

- Reinforcement Learning (RL): In reinforcement learning, an agent learns to make decisions by interacting with its environment and receiving feedback in the form of rewards or penalties. This type of learning allows deep learning systems to optimize their actions over time to maximize the cumulative reward. One of the most well-known applications of RL is in game playing. AlphaGo, for example, learned to play Go at a superhuman level by combining deep neural networks with tree search and refining its strategy through self-play.

- Hyperparameter Optimization: Deep learning models depend on hyperparameters, settings chosen before training such as learning rate, batch size, and the number and size of layers. Hyperparameter optimization is the process of tuning these settings to improve model performance. Techniques like grid search, random search, and Bayesian optimization are used to search for a well-performing combination of hyperparameters.

- Meta-Learning (Learning to Learn): Meta-learning, also known as “learning to learn,” is a technique where deep learning models optimize their learning process. Rather than learning specific tasks, these models learn how to adapt to new tasks more efficiently by adjusting their learning strategies. Meta-learning is used in applications where the system must quickly adapt to new data or environments with minimal human intervention.

- Neural Architecture Search (NAS): NAS is an advanced technique used to automatically search for high-performing neural network structures. By exploring different architectures and evaluating their performance, NAS can surface designs that humans may not have considered, leading to better results in specific tasks.
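Of the mechanisms above, random search for hyperparameters is the simplest to sketch. The example below is illustrative only: `toy_validation_loss` is a hypothetical stand-in for "train a model with these settings and return its validation loss", and the search ranges are assumptions rather than recommendations.

```python
import math
import random

def toy_validation_loss(learning_rate, batch_size):
    """Hypothetical objective standing in for a real training run.

    It is constructed so the best settings are learning_rate = 0.01 and
    batch_size = 64; a real objective would train and evaluate a model.
    """
    lr_term = (math.log10(learning_rate) + 2.0) ** 2
    batch_term = ((batch_size - 64) / 64.0) ** 2
    return lr_term + batch_term

def random_search(objective, n_trials=50, seed=0):
    """Sample configurations at random and keep the best one seen."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _trial in range(n_trials):
        config = {
            # Learning rates are sampled log-uniformly between 1e-5 and 1.
            "learning_rate": 10 ** rng.uniform(-5, 0),
            "batch_size": rng.choice([16, 32, 64, 128, 256]),
        }
        trial_loss = objective(**config)
        if trial_loss < best_loss:
            best_config, best_loss = config, trial_loss
    return best_config, best_loss

if __name__ == "__main__":
    config, score = random_search(toy_validation_loss)
    print("best configuration found:", config)
    print("validation loss:", score)
```

Grid search would replace the random sampling with an exhaustive sweep over fixed values, and Bayesian optimization would use earlier trial results to decide where to sample next. Neural architecture search follows the same outer loop, with the architecture itself as the thing being sampled.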

3. Applications of Self-Optimization in AI

3.1 Healthcare: Optimizing Diagnosis and Treatment

In the healthcare industry, AI-powered systems are being used to improve diagnostic accuracy and personalize treatment plans. Deep learning models can analyze medical data such as medical imaging, genetic data, and patient records to identify diseases early, predict outcomes, and recommend the most effective treatments.

- Self-Optimizing Diagnostics: Deep learning models can continually improve their diagnostic accuracy as they process more data. For instance, AI systems used in radiology can automatically adjust their parameters based on feedback from previous cases, enabling them to better identify abnormalities in medical images like X-rays and MRIs.

- Personalized Medicine: Self-optimizing AI models can help identify the best treatment options for individual patients by analyzing data such as genetic markers, lifestyle factors, and past treatment responses. These models can evolve over time to refine their recommendations as new data becomes available.

3.2 Autonomous Vehicles: Navigating Complex Environments

Self-optimization plays a critical role in the development of autonomous vehicles. AI models used in self-driving cars must process vast amounts of data from sensors, cameras, and radar in real time to make split-second decisions about navigation, obstacle avoidance, and route planning.

- Reinforcement Learning in Autonomous Vehicles: Self-driving systems can use reinforcement learning, often in simulation, to improve their decision-making algorithms. For example, the vehicle may encounter an unfamiliar driving scenario, such as navigating through heavy traffic or adverse weather conditions. By receiving feedback based on its actions (e.g., a reward for safely reaching its destination), the system learns how to adapt its driving strategy over time.
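The reward-driven loop described above can be illustrated with tabular Q-learning on a toy problem. This is a sketch under strong assumptions: the "road" is a one-dimensional track of five cells, the only reward is for reaching the final cell, and every name and constant is illustrative. Real driving systems involve far richer state, neural function approximators, and extensive safety engineering.

```python
import random

N_STATES = 5          # positions 0..4 on a one-dimensional track
GOAL = N_STATES - 1   # reaching the last position ends the episode
ACTIONS = (0, 1)      # 0 = step left, 1 = step right

def step(state, action):
    """Toy environment: move one cell; reward 1.0 only on reaching the goal."""
    next_state = max(0, state - 1) if action == 0 else min(GOAL, state + 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL

def choose_action(q_row, epsilon, rng):
    """Epsilon-greedy selection with random tie-breaking."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q_row)
    return rng.choice([a for a in ACTIONS if q_row[a] == best])

def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1,
               max_steps=200, seed=0):
    """Learn action values from repeated interaction with the environment."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = 0
        for _step in range(max_steps):
            action = choose_action(q[state], epsilon, rng)
            next_state, reward, done = step(state, action)
            # The goal is terminal, so it contributes no future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q

def greedy_policy(q):
    """Best known action for every non-terminal state."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(GOAL)]

if __name__ == "__main__":
    q_table = q_learning()
    print("greedy policy (1 = right):", greedy_policy(q_table))
```

The agent is never told that moving right is correct. It discovers this because the reward at the goal propagates backward through the value estimates, discounted by `gamma` at each step.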

3.3 Finance: Optimizing Trading Strategies

In the financial sector, deep learning and self-optimization are used to enhance trading algorithms, risk management systems, and fraud detection. AI-powered trading platforms use deep learning models to analyze vast amounts of market data, including historical prices, trading volumes, and economic indicators, to make predictions about future market movements.

- Self-Optimizing Trading Algorithms: AI trading systems can automatically adjust their parameters in real time to refine trading strategies. By learning from past performance and adapting to changing market conditions, these systems aim to improve returns while keeping risk under control.

- Fraud Detection: Self-optimizing AI models can improve their ability to detect fraudulent transactions by analyzing transaction patterns and adjusting to new types of fraud techniques. These systems continuously learn from new data, making them more effective at spotting anomalies over time.
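The idea of a detector that keeps learning from each new transaction can be sketched with a simple streaming statistic. The example below is a deliberately simplified stand-in for a learned fraud model: it tracks a running mean and variance of transaction amounts (Welford's algorithm) and flags values that fall far outside them. The class name, threshold, and warm-up length are assumptions made for illustration.

```python
import math

class StreamingAnomalyDetector:
    """Flags amounts that deviate strongly from what has been seen so far."""

    def __init__(self, threshold=3.0, warmup=10):
        self.threshold = threshold  # how many standard deviations count as odd
        self.warmup = warmup        # observations required before flagging
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations

    def score(self, amount):
        """Distance from the running mean, in standard deviations."""
        if self.count < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.count - 1))
        return abs(amount - self.mean) / std if std > 0 else 0.0

    def observe(self, amount):
        """Judge the amount against past data, then learn from it."""
        is_anomaly = (self.count >= self.warmup
                      and self.score(amount) > self.threshold)
        # Welford's update: adjusts mean and variance in a single pass.
        self.count += 1
        delta = amount - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (amount - self.mean)
        return is_anomaly

if __name__ == "__main__":
    detector = StreamingAnomalyDetector()
    amounts = [20, 22, 19, 21, 20, 23, 18, 21, 20, 22, 19, 21, 500]
    for amount in amounts:
        if detector.observe(amount):
            print("flagged transaction amount:", amount)
```

One design choice worth noting: this sketch folds every observation, including flagged ones, into its statistics. A production system would more likely hold flagged transactions out until they are reviewed, so that confirmed fraud does not shift the definition of normal.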

3.4 Manufacturing: Optimizing Production Lines

In the manufacturing sector, AI-driven systems optimize everything from production lines to inventory management and quality control. Deep learning models can be used to detect defects in products, predict equipment failures, and streamline operations.

- Predictive Maintenance: Self-optimizing systems can predict when machines are likely to fail and adjust maintenance schedules accordingly. By continuously learning from sensor data, these systems can become increasingly accurate in predicting failures, reducing downtime, and improving overall efficiency.

4. Challenges and Future Directions

4.1 Challenges of Deep Learning and Self-Optimization

While deep learning and self-optimization hold immense potential, there are several challenges to overcome:

- Data Dependency: Deep learning models require large amounts of high-quality data to function effectively. In many industries, obtaining such data can be difficult, especially when dealing with privacy concerns and data scarcity.

- Computational Resources: Training deep learning models is resource-intensive, requiring significant computational power and energy. This can be a barrier for smaller organizations or those with limited access to advanced hardware.

- Interpretability and Transparency: One of the main criticisms of deep learning is its lack of interpretability. Unlike simpler models such as linear regression or decision trees, deep learning systems are often seen as “black boxes,” making it difficult to understand how they arrive at specific decisions. This poses challenges for industries like healthcare and finance, where understanding the reasoning behind decisions is crucial.

4.2 Future Directions for Deep Learning and Self-Optimization

Looking forward, the future of deep learning and self-optimization is bright. We can expect continued advancements in:

- Explainable AI (XAI): Researchers are working to develop methods for making deep learning models more transparent and interpretable, which will be crucial for applications in sensitive areas like healthcare and finance.

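- Federated Learning: This approach allows AI models to be trained on decentralized data that stays on the devices or servers where it was collected. Only model updates are shared and aggregated, which improves privacy and security while still benefiting from vast amounts of data.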

- Generalized AI Models: Future developments may lead to more generalized AI systems that can perform a broader range of tasks without being retrained from scratch for each new challenge.
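Of the directions listed above, federated learning is the most straightforward to illustrate in code. The sketch below follows the general shape of federated averaging: each client trains locally on its own data, and only the resulting model parameters are averaged centrally. Everything here is a simplifying assumption, including the two-parameter linear model, the tiny noise-free datasets, and the function names.

```python
def local_update(weights, data, lr=0.1, epochs=5):
    """One client's local training: gradient descent on y = w*x + b (MSE)."""
    w, b = weights
    for _epoch in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

def federated_average(client_weights, client_sizes):
    """Average client models, weighting each by its number of examples."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return w, b

def federated_training(clients, rounds=100):
    """Repeat: broadcast the global model, train locally, then average."""
    global_weights = (0.0, 0.0)
    for _round in range(rounds):
        # Raw data never leaves a client; only (w, b) pairs are returned.
        updates = [local_update(global_weights, data) for data in clients]
        global_weights = federated_average(updates, [len(d) for d in clients])
    return global_weights

# Two clients hold different slices of data generated by y = 2x + 1.
CLIENT_A = [(-1.0, -1.0), (-0.5, 0.0)]
CLIENT_B = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]

if __name__ == "__main__":
    w, b = federated_training([CLIENT_A, CLIENT_B])
    print("learned slope and intercept:", w, b)
```

Neither client sees the other's data, yet the shared model recovers the relationship that underlies both datasets. Practical deployments typically add protections this sketch omits, such as secure aggregation or differential privacy, because model updates alone can still leak information.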

5. Conclusion

Deep learning and self-optimization are at the forefront of AI’s evolution, enabling machines to learn, adapt, and improve their performance autonomously. These technologies are already revolutionizing industries like healthcare, autonomous vehicles, finance, and manufacturing. However, challenges related to data quality, computational resources, and model interpretability remain to be addressed.

As AI continues to advance, the integration of self-optimization capabilities will play an increasingly vital role in creating intelligent, autonomous systems that can enhance efficiency, decision-making, and productivity across a wide range of sectors. The future of deep learning and self-optimization promises exciting opportunities, but it will require careful attention to ethical, technical, and operational challenges to ensure their responsible and effective deployment.